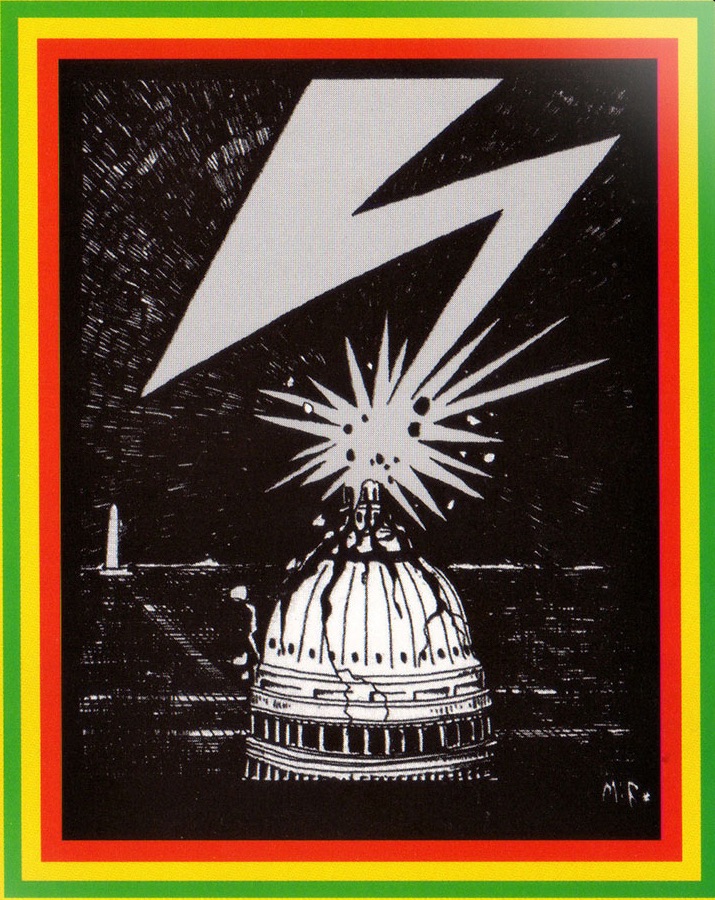

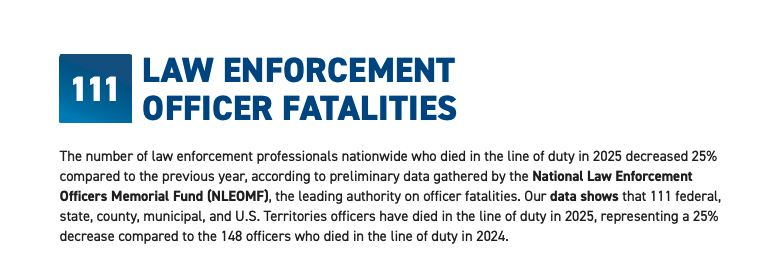

New Facebook & YouTube Rules Enable Elite Whites to Better Control the Domain of Discourse & Chill Speech

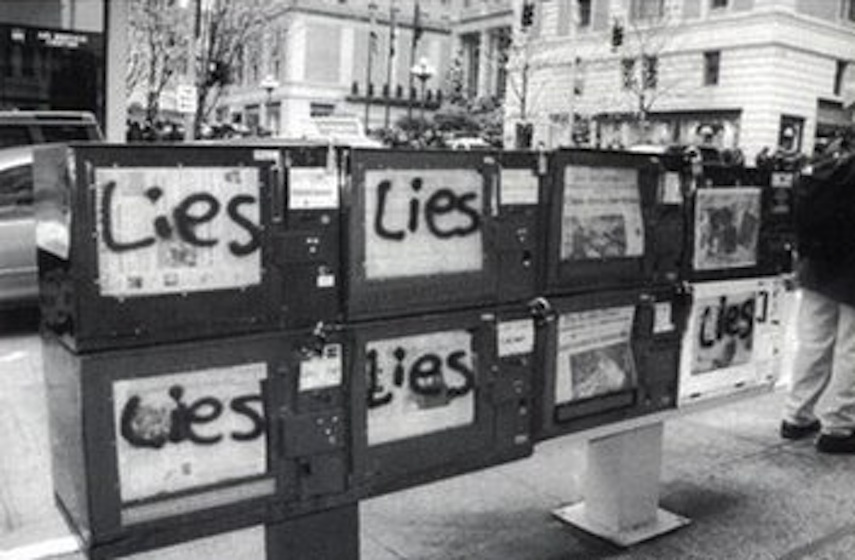

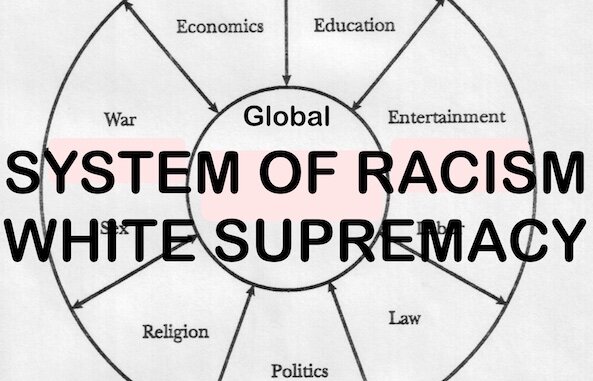

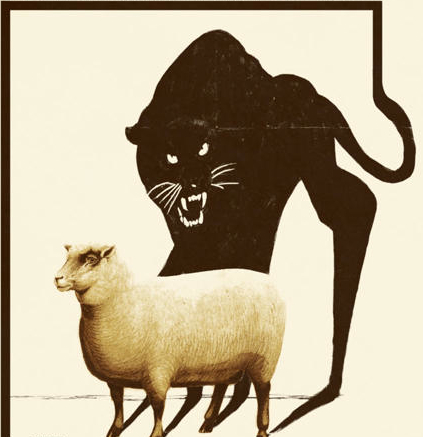

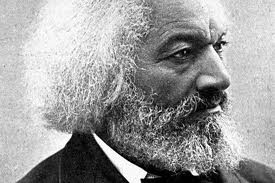

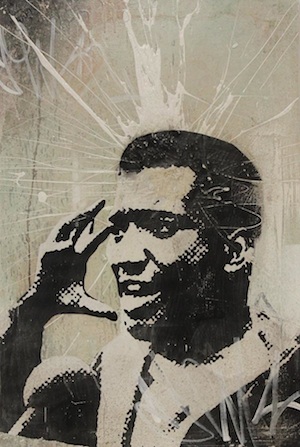

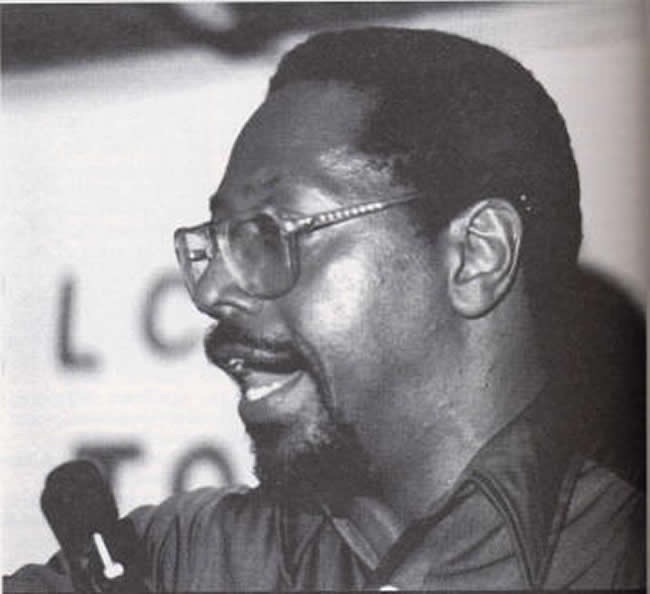

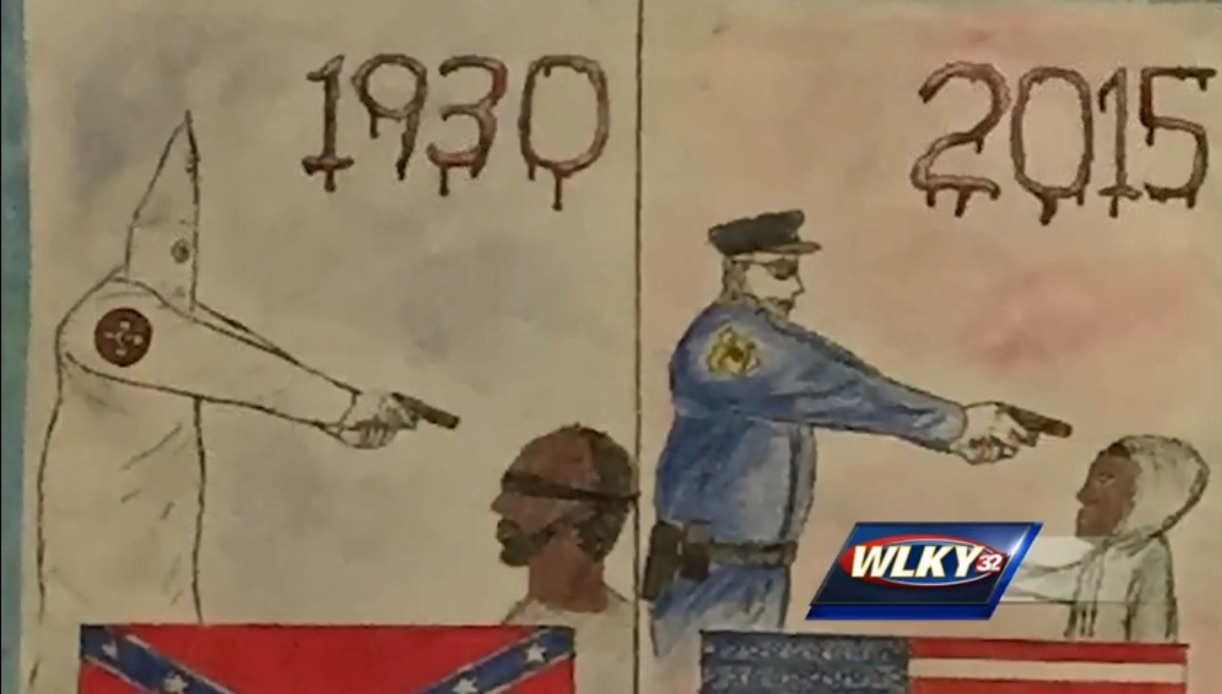

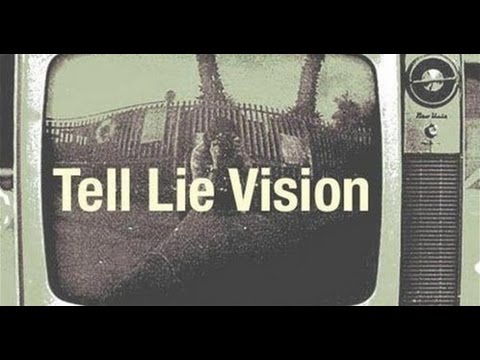

According to Amos Wilson, "the most effective means of disseminating and reproducing ideas in society, and in the Afrikan American community in particular, is to have that community perceive their dissemination and reproduction as the work of disinterested, unbiased, non-manipulative, liberal yet authoritative, White American individuals, groups, or institutions, or as flowing from sources independent of the marked influence of the powerful. Thus, White America strongly pushes and projects the powerful mythology of independent, liberal American media, universities, and other information processing establishments. That is, America loudly congratulates itself for what it calls its "free press" and mass media which permit the free exchange of ideas. Most Black Americans utilize White media and these factors as their primary, if not sole, source of information. Most are not mindful of the fact that the American press and mass media are privately owned, profit-making, White elite-controlled corporations. The press is one among other institutions, "and one of the most important in maintaining the hegemony of the corporate class and the capitalist system itself," advances Parenti.

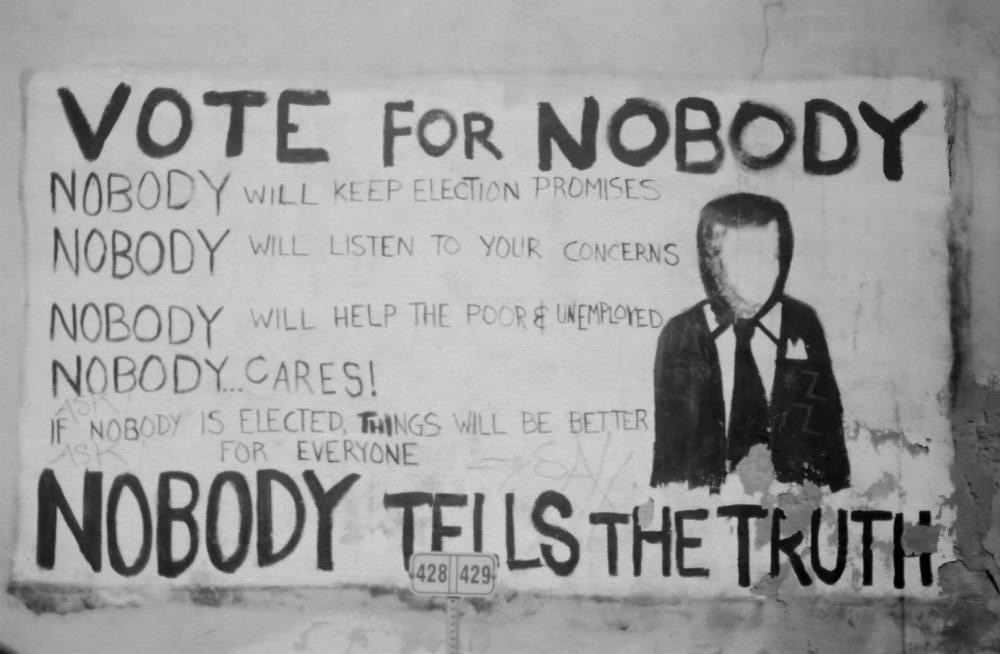

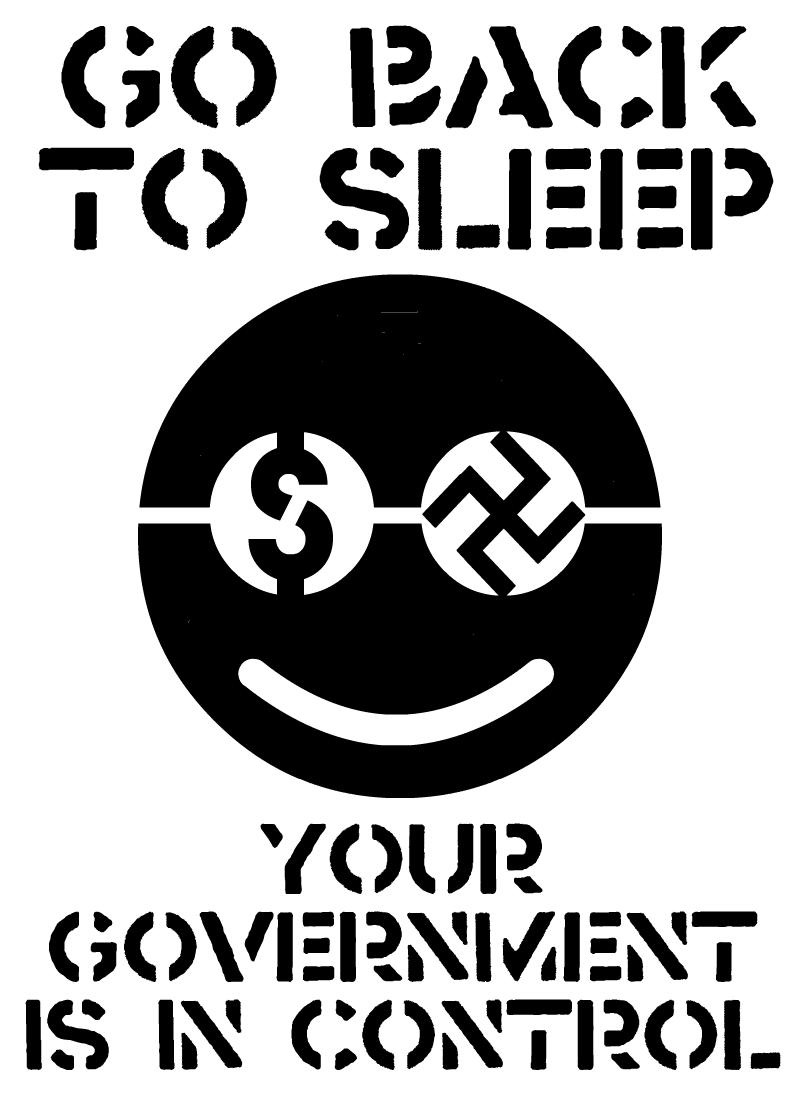

If the press cannot mold our every opinion, it can frame the perpetual reality around which our opinions take shape. Here may lie the most important effect of the news media: they set the issue agenda for the rest of us, choosing what to emphasize and what to ignore or suppress, in effect, organizing our political world for us. The media may not always be able to tell us what to think, but they are strikingly successful in telling us what to think about ....

It is enough that they create opinion, visibility, giving legitimacy to certain views and illegitimacy to others. The media do the same to substantive issues that they do to candidates, raising some from oblivion and conferring legitimacy upon them, while consigning others to limbo. This power to determine the issue agenda, the information flow, and the parameters of political debate so that it extends from ultra-right to no further than moderate center, is if not total, still totally awesome.

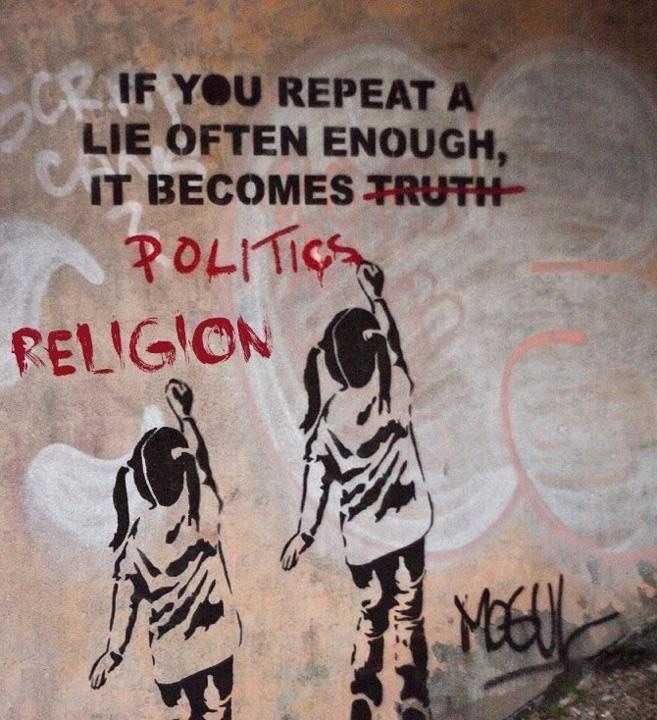

The central aim of the ruling elite's ideology process is to define the "domain of discourse." That is, the corporate elite seeks to define the limits of "acceptable ideas" and to define what is worth talking about, worth learning, teaching, promoting, and writing about. Of course, the limits of the "acceptable," the "responsible," are set at those points which support and justify the interests of the elite itself. To a great extent the elite ideology process essentially involves the reinforcement of long-held, orthodox "American" values, perspectives, practices and ideals (which the system of power relations has already indirectly shaped to begin with). These factors are the ideological bases of elite power. It is a well-known fact that propaganda works best "when used to reinforce an already existing notion or to establish a logical or emotional connection between a new idea and a social norm." [MORE]

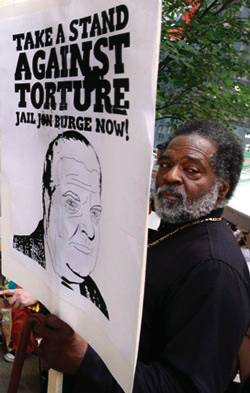

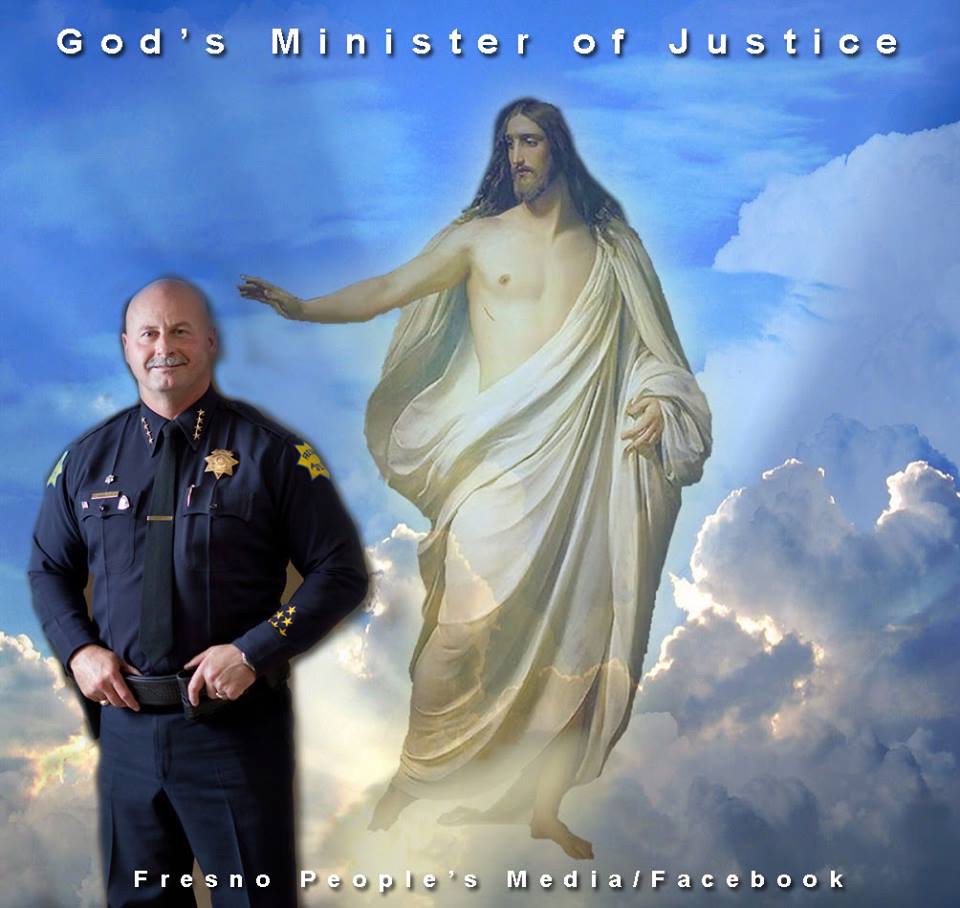

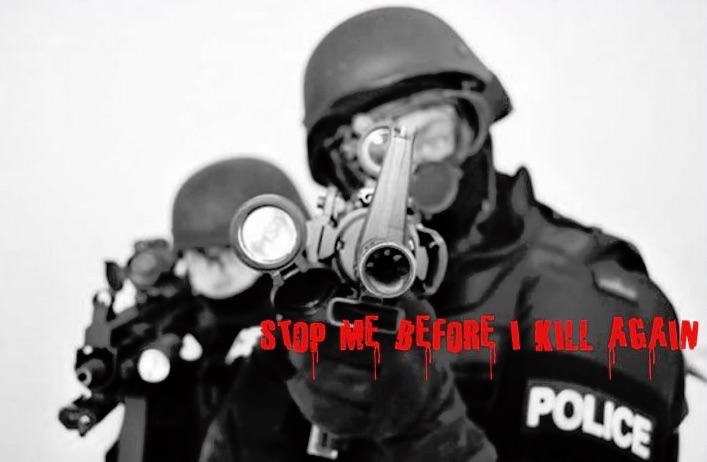

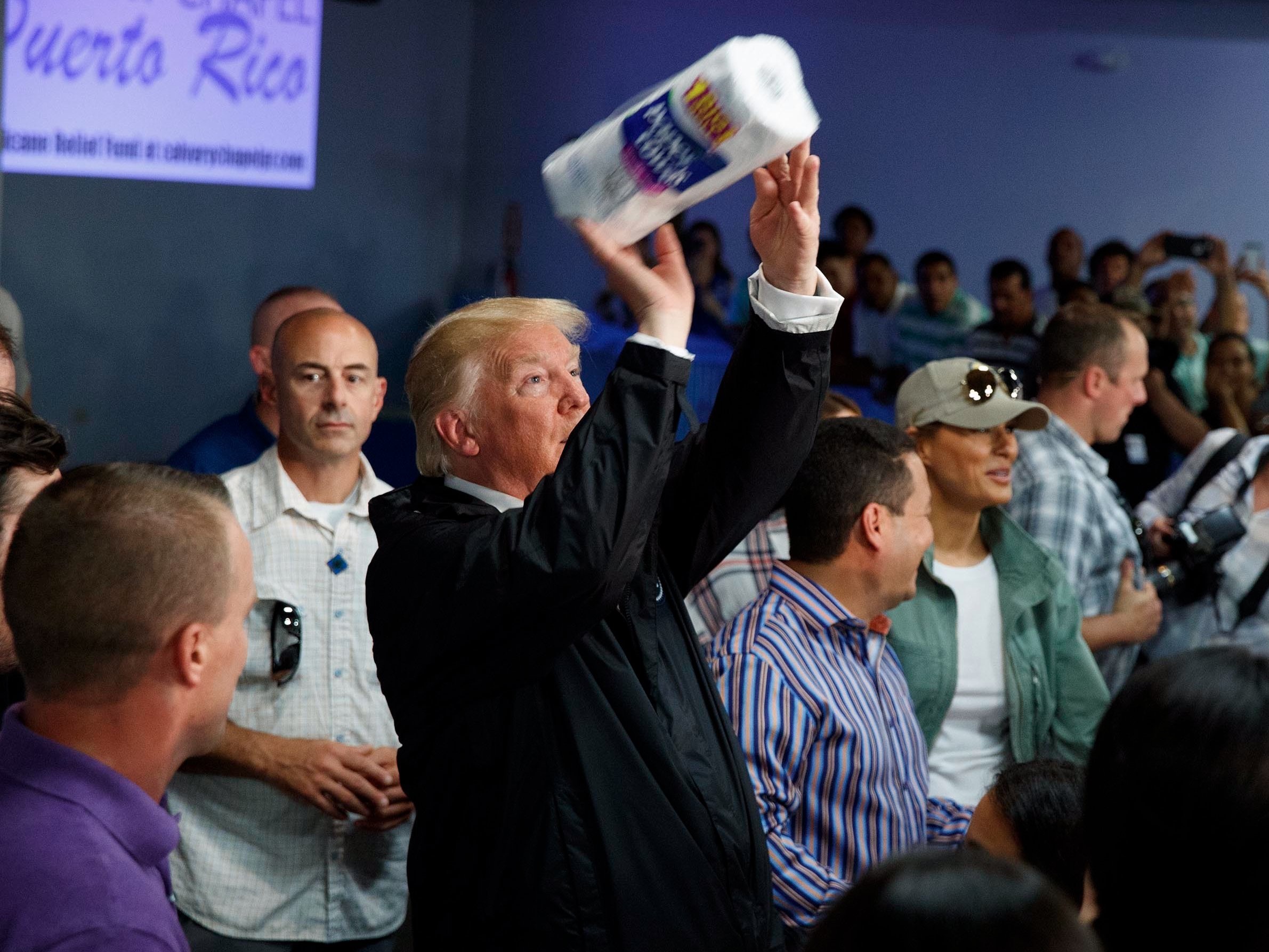

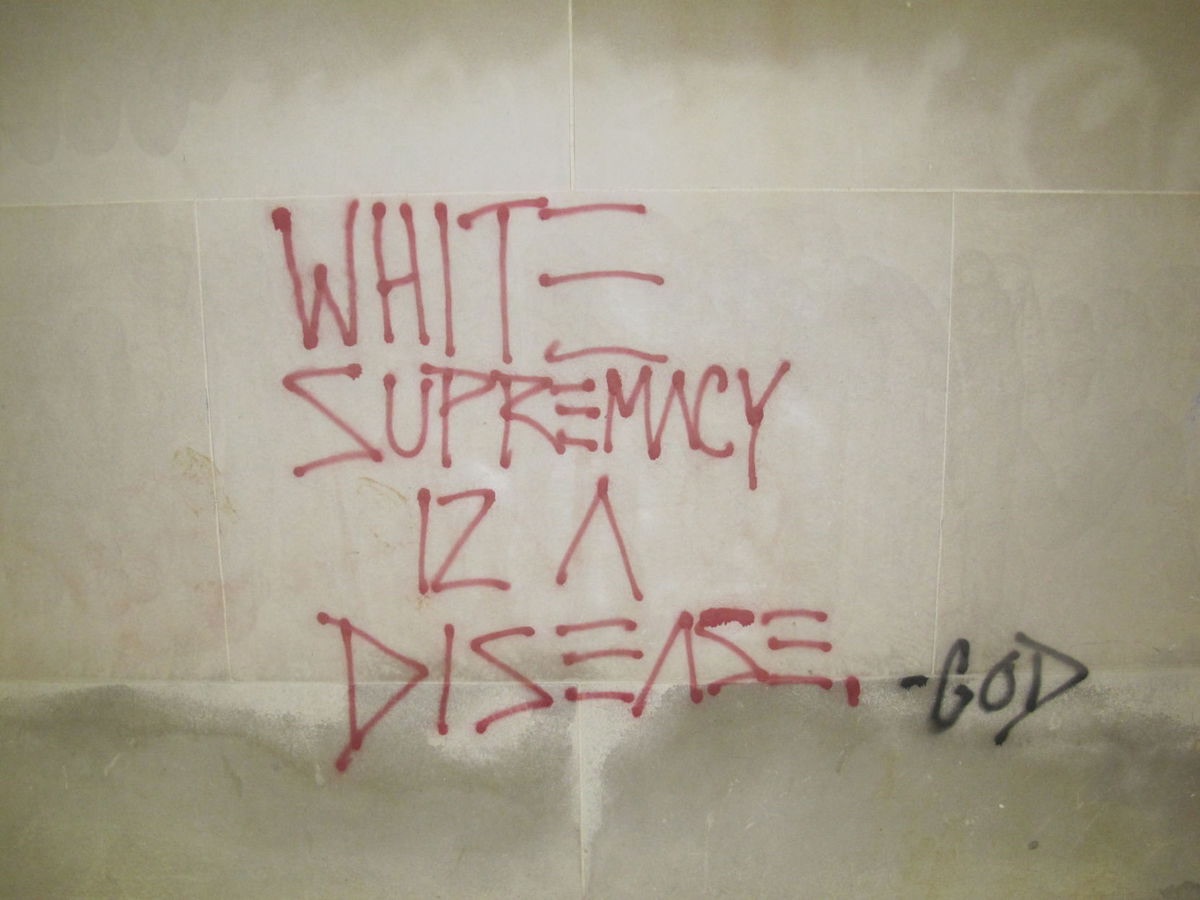

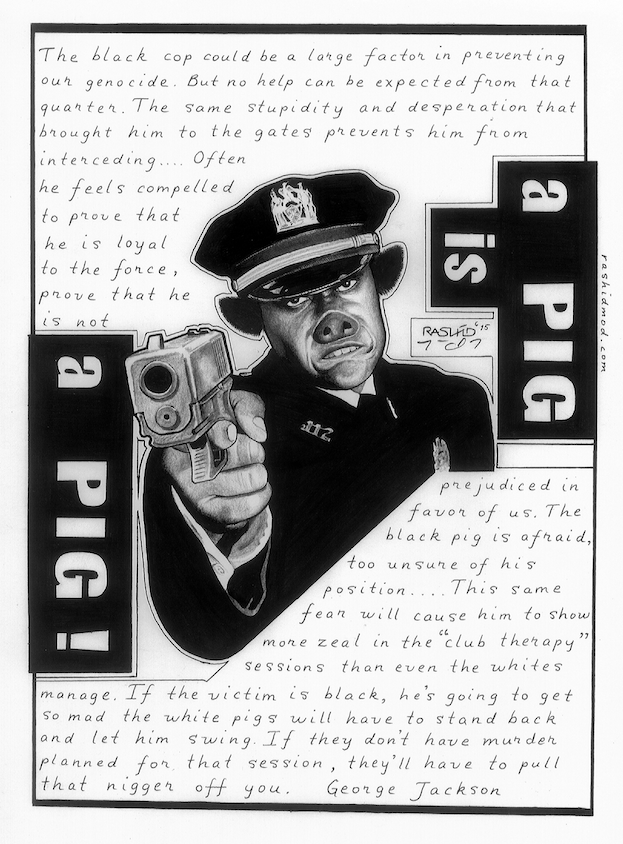

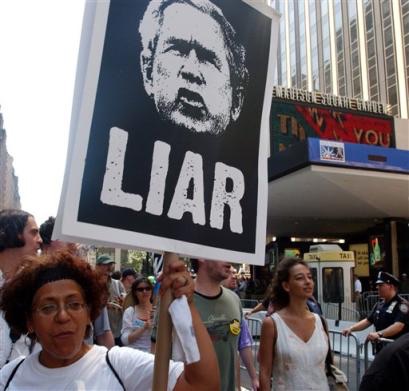

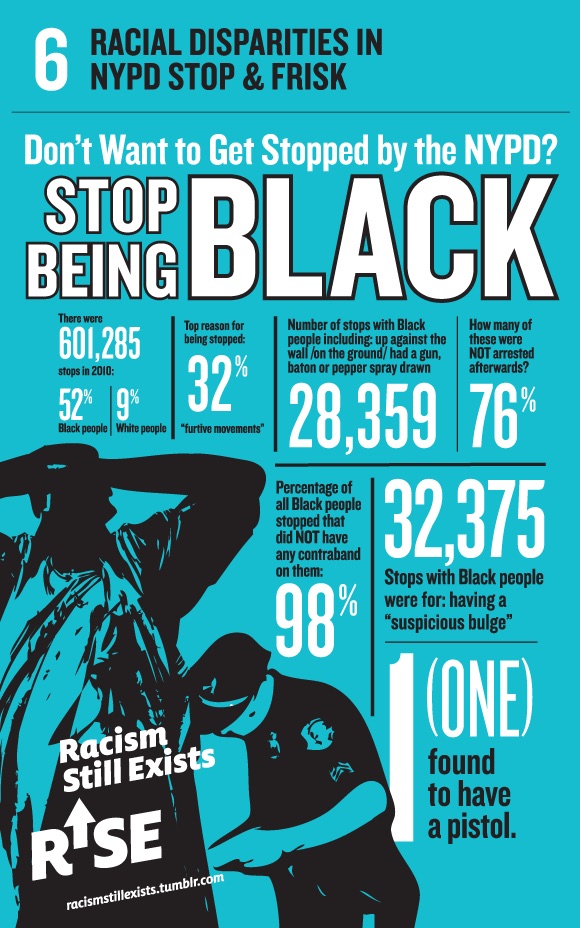

Facebook’s Secret Censorship Rules Protect White Men From Hate Speech But Not Black Children. From [MintPress] In the wake of a terrorist attack in London earlier this month, a U.S. congressman wrote a Facebook post in which he called for the slaughter of “radicalized” Muslims. “Hunt them, identify them, and kill them,” declared U.S. Rep. Clay Higgins, a Louisiana Republican. “Kill them all. For the sake of all that is good and righteous. Kill them all.”

Higgins’ plea for violent revenge went untouched by Facebook workers who scour the social network deleting offensive speech.

But a May posting on Facebook by Boston poet and Black Lives Matter activist Didi Delgado drew a different response.

“All white people are racist. Start from this reference point, or you’ve already failed,” Delgado wrote. The post was removed and her Facebook account was disabled for seven days.

A trove of internal documents reviewed by ProPublica sheds new light on the secret guidelines that Facebook’s censors use to distinguish between hate speech and legitimate political expression. The documents reveal the rationale behind seemingly inconsistent decisions. For instance, Higgins’ incitement to violence passed muster because it targeted a specific sub-group of Muslims — those that are “radicalized” — while Delgado’s post was deleted for attacking whites in general.

Over the past decade, the company has developed hundreds of rules, drawing elaborate distinctions between what should and shouldn’t be allowed, in an effort to make the site a safe place for its nearly 2 billion users. The issue of how Facebook monitors this content has become increasingly prominent in recent months, with the rise of “fake news” — fabricated stories that circulated on Facebook like “Pope Francis Shocks the World, Endorses Donald Trump For President, Releases Statement” — and growing concern that terrorists are using social media for recruitment.

While Facebook was credited during the 2010-2011 “Arab Spring” with facilitating uprisings against authoritarian regimes, the documents suggest that, at least in some instances, the company’s hate-speech rules tend to favor elites and governments over grassroots activists and racial minorities. In so doing, they serve the business interests of the global company, which relies on national governments not to block its service to their citizens.

One Facebook rule, which is cited in the documents but that the company said is no longer in effect, banned posts that praise the use of “violence to resist occupation of an internationally recognized state.” The company’s workforce of human censors, known as content reviewers, has deleted posts by activists and journalists in disputed territories such as Palestine, Kashmir, Crimea and Western Sahara.

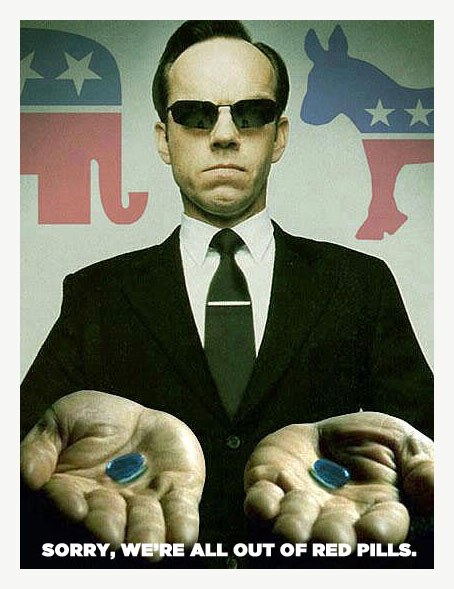

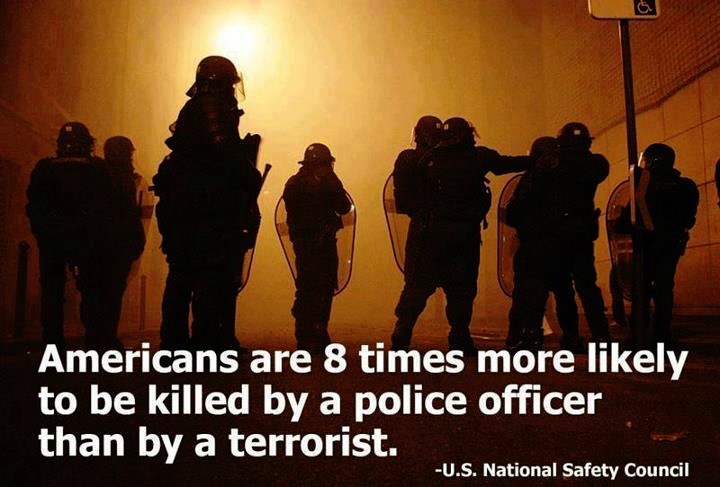

One document trains content reviewers on how to apply the company’s global hate speech algorithm. The slide identifies three groups: female drivers, black children and white men. It asks: Which group is protected from hate speech? The correct answer: white men.

The reason is that Facebook deletes curses, slurs, calls for violence and several other types of attacks only when they are directed at “protected categories”—based on race, sex, gender identity, religious affiliation, national origin, ethnicity, sexual orientation and serious disability/disease. It gives users broader latitude when they write about “subsets” of protected categories. White men are considered a group because both traits are protected, while female drivers and black children, like radicalized Muslims, are subsets, because one of their characteristics is not protected. (The exact rules are in the slide show below.)
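The group-versus-subset distinction described above can be illustrated with a minimal sketch. This is only a reading of the rule as reported, not Facebook's actual implementation: the function name, the data layout, and the category labels "occupation", "age" and "ideology" assigned to the unprotected traits are assumptions made for illustration.

```python
# Illustrative sketch only; NOT Facebook's real code or data.
# The protected categories are the ones listed in the article.
PROTECTED_CATEGORIES = {
    "race",
    "sex",
    "gender identity",
    "religious affiliation",
    "national origin",
    "ethnicity",
    "sexual orientation",
    "serious disability/disease",
}


def is_protected_group(traits):
    """Return True only if every trait falls in a protected category.

    `traits` maps each descriptor to the category it belongs to
    (a hypothetical representation chosen for this example).
    """
    return bool(traits) and all(
        category in PROTECTED_CATEGORIES for category in traits.values()
    )


# Category labels for the unprotected traits are assumptions.
EXAMPLES = {
    "white men": {"white": "race", "men": "sex"},
    "female drivers": {"female": "sex", "drivers": "occupation"},
    "black children": {"black": "race", "children": "age"},
    "radicalized Muslims": {
        "Muslims": "religious affiliation",
        "radicalized": "ideology",
    },
}

for name, traits in EXAMPLES.items():
    status = "protected group" if is_protected_group(traits) else "subset"
    print(f"{name}: {status}")
```

Under this reading, a single unprotected trait is enough to turn a group into a "subset," which is why "white men" is the only one of the four examples that ends up protected.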

The Facebook Rules

Facebook has used these rules to train its “content reviewers” to decide whether to delete or allow posts. Facebook says the exact wording of its rules may have changed slightly in more recent versions. ProPublica recreated the slides.

Behind this seemingly arcane distinction lies a broader philosophy. Unlike American law, which permits preferences such as affirmative action for racial minorities and women for the sake of diversity or redressing discrimination, Facebook’s algorithm is designed to defend all races and genders equally.

“Sadly,” the rules are “incorporating this color-blindness idea which is not in the spirit of why we have equal protection,” said Danielle Citron, a law professor and expert on information privacy at the University of Maryland. This approach, she added, will “protect the people who least need it and take it away from those who really need it.” [MORE]

From [NY Times] At the age of 21, David Pakman started a little Massachusetts community radio talk program. While the young broadcaster got his show syndicated on a few public radio stations, it was a YouTube channel he began in 2009, “The David Pakman Show,” that opened up his progressive political commentary to a whole new digital audience. The show has since amassed 353,000 subscribers, and roughly half of its revenue now comes from the ads that play before his videos. He earns enough to produce the show full time and pay a lean staff.

Or, at least, he used to. Last month YouTube announced abrupt, vague changes to its automated processes for placing ads across the platform. Ads on Mr. Pakman’s YouTube channel evaporated, dropping to as little as 6 cents a day, and forcing him to set up a crowdfunding page to help cover $20,000 a month in operating costs.

“This is an existential threat to the show,” Mr. Pakman said. “We need that money.”

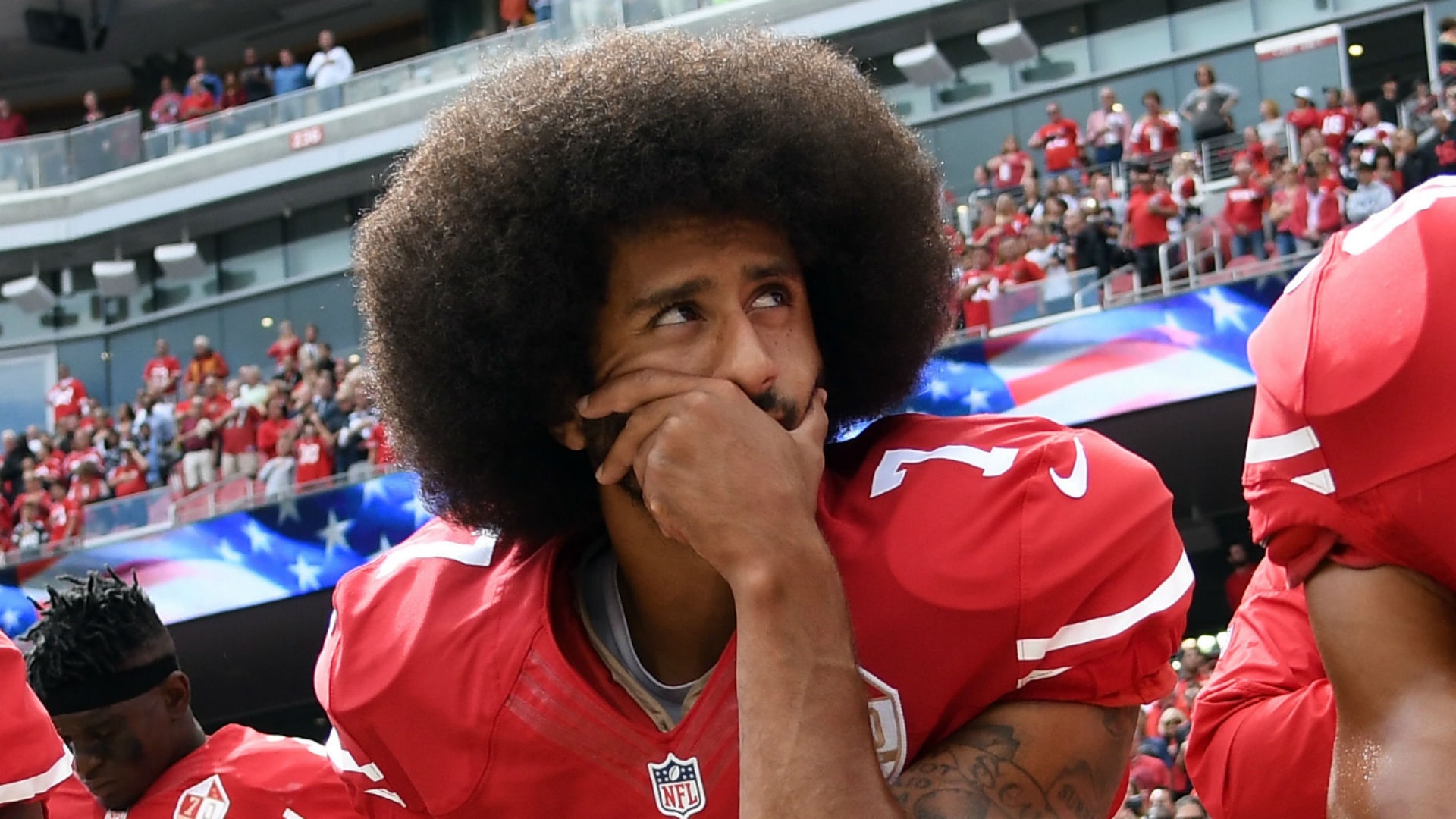

Since its 2005 debut with the slogan “Broadcast Yourself,” YouTube has positioned itself as a place where any people with camera phones can make a career of their creativity and thrive free of the grip of corporate media gatekeepers. It also has given a space to a plethora of alternative Black channels that have flourished on YouTube, such as Moor Info, Victim of RWS, Tariq Live, Shen Pe Uts Taa-Neter and Black Magik363. These shows sit outside the white ideology process and stand in sharp contrast to mainstream Black media; they attempt to confront Black self-definition and the racist system we are dealing with. However, like mainstream Black media [white media in Black face], these alt Black channels are also dependent on white elite financing and underwritten by white elite advertising revenues provided by Google/YouTube.

But in order to share in the advertising wealth a user base of more than a billion can provide, independent producers must satisfy the demands of YouTube’s unfeeling, opaque and shifting algorithms.

The architecture of the internet has tremendous influence over what is made, and what is seen; algorithms influence what content spreads further on Facebook and turns up on top of Google searches. YouTube’s process for mechanically pulling ads from videos is particularly concerning, because it takes aim at whole topics of conversation that could be perceived as potentially offensive to advertisers, and because it so often misfires. It risks suppressing political commentary and jokes. It puts the wild, independent internet in danger of becoming more boring than TV.

YouTube’s most serious ad change yet came in the wake of reports from The Times of London and The Wall Street Journal that ads were appearing on YouTube videos that espoused extremism and hate speech. When major advertisers like AT&T and Johnson & Johnson withdrew their spots, YouTube announced that it would try to make the site more palatable to advertisers by “taking a tougher stance on hateful, offensive and derogatory content.”

But according to YouTube creators, that shift has also punished video makers who bear no resemblance to terrorist sympathizers and racists. YouTube’s comedians, political commentators and experts on subjects from military arms to video games have reported being squeezed by the ad shake-up — often in videos they’ve posted to YouTube. On the site, it’s known as “the adpocalypse.”

The topics of Mr. Pakman’s videos are no more controversial than the programming typically found on CNN or the local news. In fact, because his show is also broadcast over the radio, it adheres strictly to FCC content rules. But the show takes pride in its independence from corporate ownership. “I have no boss above me,” Mr. Pakman said, and because of YouTube’s automated ad systems, “I’ve never had any contact with advertisers, so it’s impossible for me to ‘sell out’ to satisfy them.”

Instead, he’s subject to the whims of the algorithm. To rein in its sprawling video empire — 400 hours of video are uploaded to the platform every minute — YouTube uses machine learning systems that can’t always discern context, or distinguish commentary or humor from hate speech. That limitation means that YouTube routinely pulls ads from content deemed “not advertiser-friendly.” That includes depictions of violence or drug use, “sexual humor” and “controversial or sensitive subjects,” including war and natural disasters. YouTube has previously blocked ads on Mr. Pakman’s news videos referring to ISIS church bombings and the assassination of a Russian diplomat. YouTube creators can appeal the decision to a human who will watch the video, but the recent changes have upended the usual processes, often leaving creators without an official channel for appeal.

Last month’s changes signaled that YouTube’s ad rules had become even stricter and less clear. YouTube announced that it was leading advertisers toward “content that meets a higher level of brand safety.” YouTube gave creators no further hints on what that means, just issuing a blanket message telling them they may experience “fluctuations” in revenue as the new systems are “fine-tuned.”

“It’s getting so bad that you can’t even speak your mind or be honest without fear of losing money and being not ‘brand-friendly,’” said Ethan Klein, the creator of h3h3. “YouTube is on the fast track to becoming Disney vloggers: beautiful young people that wouldn’t say anything controversial and are always happy.” [MORE]

YouTube will no longer allow creators to make money until they reach 10,000 views. From [TheVerge] Five years ago, YouTube opened their partner program to everyone. This was a really big deal: it meant anyone could sign up for the service, start uploading videos, and immediately begin making money. This model helped YouTube grow into the web’s biggest video platform, but it has also led to some problems. People were creating accounts that uploaded content owned by other people, sometimes big record labels or movie studios, sometimes other popular YouTube creators.

In an effort to combat these bad actors, YouTube has announced a change to its partner program today. From now on, creators won’t be able to turn on monetization until they hit 10,000 lifetime views on their channel. YouTube believes that this threshold will give them a chance to gather enough information on a channel to know if it’s legit. And it won’t be so high as to discourage new independent creators from signing up for the service.

“In a few weeks, we’ll also be adding a review process for new creators who apply to be in the YouTube Partner Program. After a creator hits 10k lifetime views on their channel, we’ll review their activity against our policies,” wrote Ariel Bardin, YouTube’s VP of product management, in a blog post published today. “If everything looks good, we’ll bring this channel into YPP and begin serving ads against their content. Together these new thresholds will help ensure revenue only flows to creators who are playing by the rules.”

Of course, along with protecting the creators on its service whose videos are being re-uploaded by scam artists, these new rules may help YouTube keep offensive videos away from the brands that spend money marketing on their platform. This has been a big problem for YouTube in recent weeks. “This new threshold gives us enough information to determine the validity of a channel,” wrote Bardin. “It also allows us to confirm if a channel is following our community guidelines and advertiser policies.” [MORE]

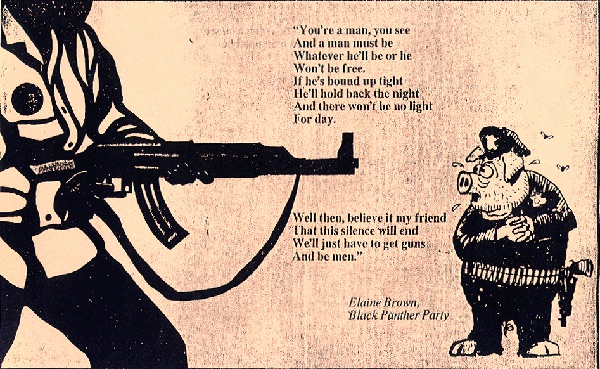

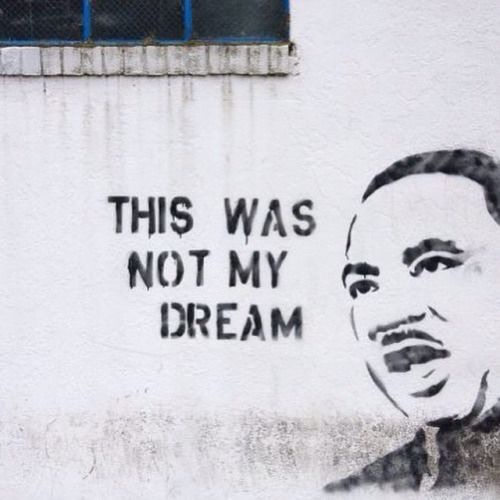

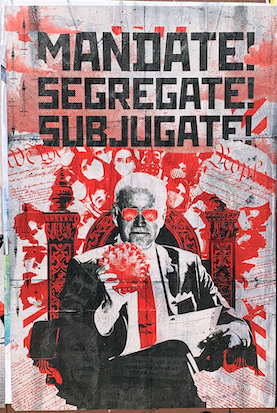

“It’s as though terrorism can’t be white.” From [theIntercept] PUBLICLY TRADED COMPANIES don’t typically need to issue statements saying that they do not support terrorism. But Facebook is no ordinary company; its sheer scale means it is credited as a force capable of swaying elections, commerce, and, yes, violent radicalization. In June, the social network published an article outlining its counterterrorism policy, stating unequivocally that “There’s no place on Facebook for terrorism.” Bad news for foreign plotters and jihadis, maybe, but what about Americans who want violence in America?

In its post, Facebook said it will use a combination of artificial intelligence-enabled scanning and “human expertise” to “keep terrorist content off Facebook, something we have not talked about publicly before.” The detailed article takes what seems to be a zero-tolerance stance on terrorism-related content:

We remove terrorists and posts that support terrorism whenever we become aware of them. When we receive reports of potential terrorism posts, we review those reports urgently and with scrutiny. And in the rare cases when we uncover evidence of imminent harm, we promptly inform authorities. Although academic research finds that the radicalization of members of groups like ISIS and Al Qaeda primarily occurs offline, we know that the internet does play a role — and we don’t want Facebook to be used for any terrorist activity whatsoever.

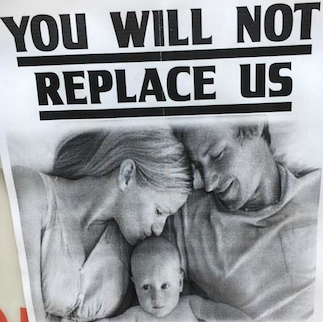

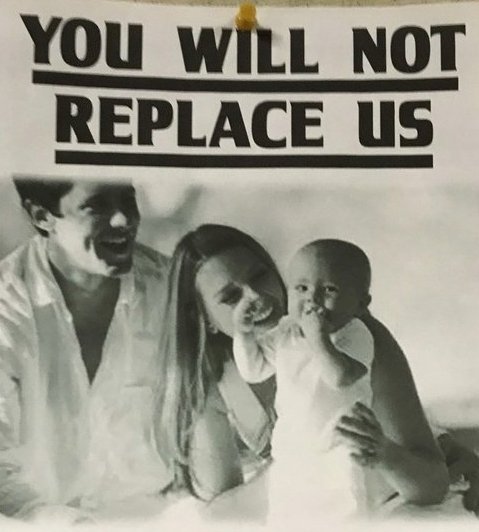

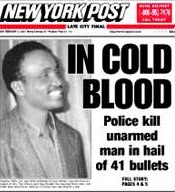

Keeping the (to put it mildly) highly motivated membership of ISIS and Al Qaeda off any site is no small feat; replacing a banned account or deleted post with a new one is a cinch. But if Facebook is serious about refusing violent radicals a seat at the table, it’s only doing half its job, as the site remains a cozy home for domestic — let’s be frank: white — extremists in the United States, whose views and hopes are often no less heinous and gory than those of the Islamic State.

Asked whether the company is as committed to combating white extremists as jihadists, a Facebook spokesperson reiterated that the company’s policy was to refuse a presence to any person or group who supports a violent agenda. When asked about specific entities like the Bundy family, the spokesperson replied, “I can’t speak to the specific groups you cited, but, again, if a group has engaged in acts of violence, we don’t want to give them a voice on Facebook.” So what’s violence, then? “Does Facebook,” I asked, “consider the armed takeover of a federal building to be an act of violence?” The company’s reply: “I don’t have an answer on that.” Brian Fishman, a co-author of the Facebook company post on terrorism, did not answer a request for comment.

Daryl Johnson is a former Homeland Security analyst who spent years studying domestic threats to the United States. Johnson told The Intercept that although “not all extremists are violent,” treating domestic threats as something distinct from the likes of ISIS for policy purposes “on the surface makes sense, but if you start comparing and contrasting, it doesn’t.” Domestic extremists “are using social media sites as platforms to put forth their extreme ideas and a violent agenda, enabling people to recruit and spread” their ideology — an ideology that Heidi Beirich of the Southern Poverty Law Center said is as dangerous as any threat from the Middle East. “It’s as though terrorism can’t be white,” Beirich told The Intercept after reviewing the Facebook post. “They’re willing to go hardcore against ISIS and Al Qaeda, but where’s the response to white supremacism and its role in domestic terrorism or anti-government crazies?” To Beirich, “What this article is saying is how we counter Islamic terrorism,” not terrorism per se:

My position is this is outrageous. Terrorism from the Dylan Roofs of the world is as much of a problem here in the United States as it is from the Tsarnaev brothers. The bodies stacked on both sides is about the same since 9/11. I don’t understand why they can’t see that. It’s worrying to me because it plays into the narrative as though all terrorism is coming from radical Islam, which is a false narrative. This adds to that, and it takes our eye off the ball in terms of where terrorism comes from … A large chunk of our domestic terrorism is committed by folks with beliefs like those at the Bundy Ranch or Malheur occupation.

Without further comment from Facebook, one can only speculate about why ISIS gets top billing and Bundy supporters do not — but little speculation is needed. A beheading video or pipe bomb instruction image are no-brainers for content moderators, while posts about armed anti-government sedition are perhaps murkier (especially if Facebook is going to let algorithms do the heavy lifting). Labeling right-wing extremism as terrorism would also likely cause a public relations migraine Facebook doesn’t care for, particularly after reports of how it filters out bogus right-wing news sources have already tarnished its image on the right. [MORE]